Choose from a selection of machine learning foundation models

AI is a powerful resource that businesses of many kinds can use to solve problems or improve services. But each application needs a different foundation model (FM) depending on its purpose. This is where Amazon Bedrock’s API access to multiple FMs comes in.

Stable Diffusion, created by Stability AI, allows for the generation of unique, realistic, and high-quality images, art, logos, and designs on a large scale, whereas Jurassic-2, developed by AI21 Labs, provides multilingual text generation in Spanish, French, German, Portuguese, Italian and Dutch for business applications.

Amazon Bedrock also provides exclusive access to Amazon’s own FMs, such as Amazon Titan. Amazon Titan FMs are pretrained on large datasets, making them powerful, general-purpose models. They can perform tasks such as text summarisation, text generation (for example, creating a blog post), classification, and open-ended Q&A, and they support responsible use of AI by reducing inappropriate or harmful content.
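As a rough illustration of what this API access can look like, the sketch below sends a summarisation prompt to a Titan text model through the AWS SDK for Python (boto3). It is a minimal sketch under stated assumptions, not an official recipe: the model ID, the region, the request fields (`inputText`, `textGenerationConfig`) and the response shape (`results[0].outputText`) are assumptions based on the Titan text format and should be checked against the models enabled in your own account. The helper names are hypothetical.

```python
import json
from typing import Union

# Assumed model ID, for illustration only; check which models your account can use.
TITAN_MODEL_ID = "amazon.titan-text-express-v1"


def build_titan_request(prompt: str, max_tokens: int = 256) -> str:
    """Serialise a prompt into the JSON body assumed for Titan text models."""
    return json.dumps({
        "inputText": prompt,
        "textGenerationConfig": {"maxTokenCount": max_tokens, "temperature": 0.2},
    })


def extract_titan_text(response_body: Union[str, bytes]) -> str:
    """Pull the generated text out of a Titan-style JSON response body."""
    payload = json.loads(response_body)
    return payload["results"][0]["outputText"]


def summarise(text: str, region: str = "us-east-1") -> str:
    """Send a summarisation prompt to Bedrock. Needs boto3 and AWS credentials."""
    import boto3  # imported lazily so the helpers above work without it

    client = boto3.client("bedrock-runtime", region_name=region)
    response = client.invoke_model(
        modelId=TITAN_MODEL_ID,
        contentType="application/json",
        accept="application/json",
        body=build_titan_request(f"Summarise the following text:\n{text}"),
    )
    # The response body is a stream; read it fully before parsing.
    return extract_titan_text(response["body"].read())
```

Switching to another provider's model would mean changing the model ID and the request and response formats, since each model family defines its own JSON schema.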

AI tools, and the FMs required to use them, are only as good as their data. For an improved experience, AWS users can easily and privately customise Amazon Bedrock FMs with their own proprietary data.

These customised FMs can create a unique customer experience, embodying the company’s voice, style, and services across a wide variety of consumer industries, like banking, travel, and healthcare. For example, a business that needs to auto-generate a daily report for internal circulation using all the relevant activity data can customise the model with proprietary data, including past reports, so that the FM learns how these reports should read and what datasets to report on.
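To make the daily-report example more concrete, the sketch below shows one way past reports might be turned into training records before customisation. It assumes a JSON Lines file of `prompt`/`completion` pairs, which is a common fine-tuning format; the exact schema depends on the base model, and the function names and prompt wording here are hypothetical.

```python
import json


def to_training_records(reports):
    """Turn (activity_data, report_text) pairs into prompt/completion records."""
    records = []
    for activity_data, report_text in reports:
        records.append({
            # Assumed prompt template; adapt it to how reports are requested in practice.
            "prompt": f"Write the daily report for this activity data:\n{activity_data}",
            "completion": report_text,
        })
    return records


def to_jsonl(records):
    """Serialise records as JSON Lines: one JSON object per line."""
    return "\n".join(json.dumps(r, ensure_ascii=False) for r in records)
```

The resulting file would typically be uploaded to private storage and referenced when the customisation job is created, so the proprietary reports stay within the company's own account.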

Customised FMs have multiple exciting applications across different industries. Take healthcare, for example. Generative AI could lead to faster drug discovery and more accurate diagnoses. Health technology company Philips has already worked with AWS to develop generative AI applications to improve clinical workflows and diagnostics with imaging technology.

Manufacturers can also benefit from access to Amazon’s ML models. Analysing vast amounts of internet of things (IoT) telemetry can drive predictive maintenance and reduce downtime on production lines, while generative design can produce new plans and designs for products that are stronger and cheaper and have a lower impact on the environment.

Security has always been a priority for Amazon, and Steve highlights the strong security controls AWS uses when handling sensitive information to train FMs. “Amazon Bedrock provides bar-raising security controls. Customers pass their data on AWS to Bedrock exclusively through the AWS network, and never via public internet. Privacy should be the cornerstone of any enterprise-ready generative AI technology, to ensure a customer’s private data and model customisations do not benefit other companies, including competitors.”

“We're making these foundation models available for people to consume, rather than having to build them for themselves – saving months of potential effort, plus additional development and training costs,” explains Steve. “The idea behind Amazon Bedrock is to provide an easy-to-consume, off-the-shelf way of accessing these powerful models and AI tools without the need for any data science knowledge.”

"At AWS, we have played a key role in democratising AI and ML, making it accessible to anyone who wants to use it, including more than 100,000 customers of all sizes and industries"

Swami Sivasubramanian, VP, Database, Analytics and ML at AWS

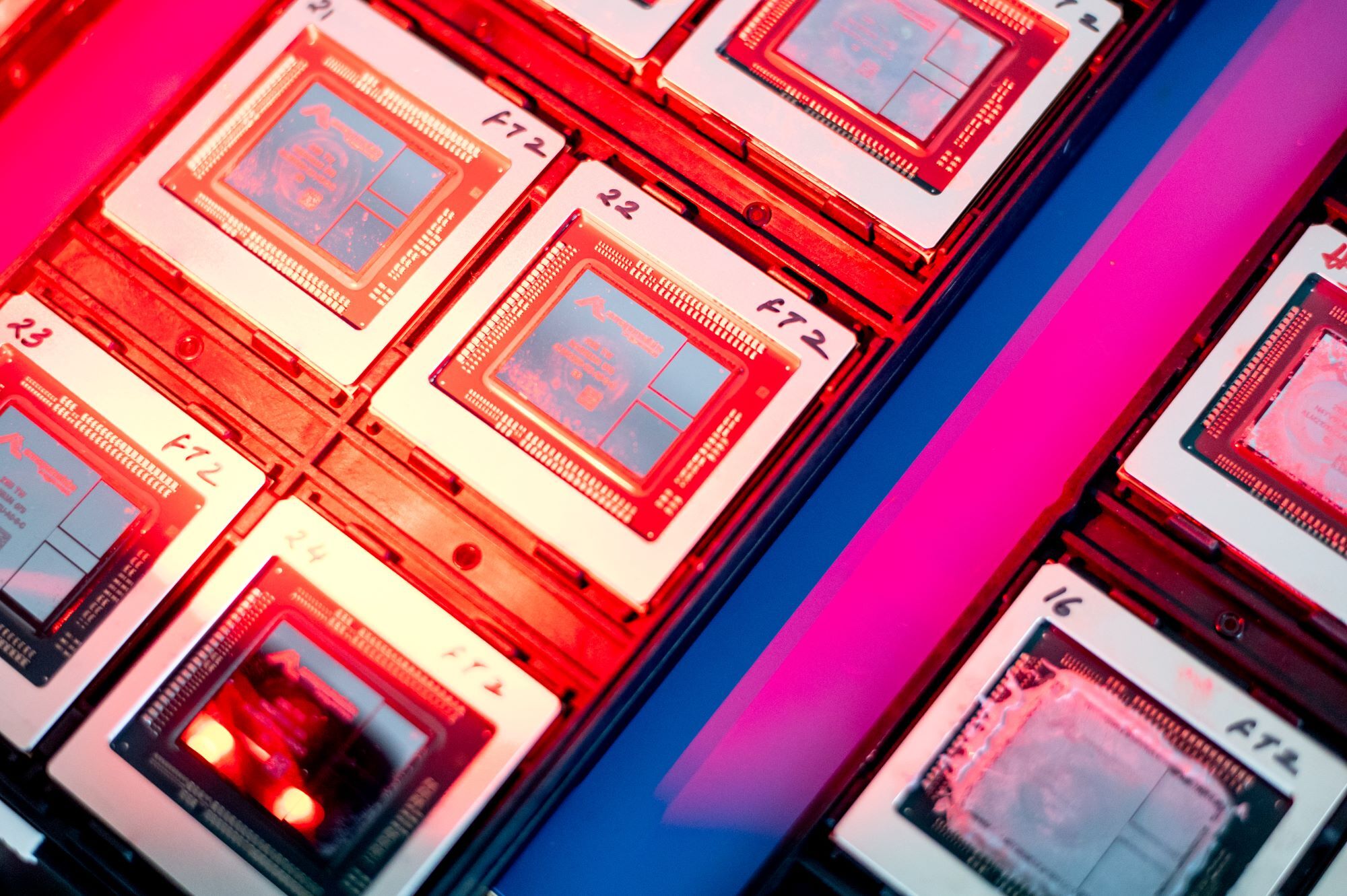

AWS believes in levelling the playing field when it comes to using AI, creating accessibility for startups and businesses to supercharge their work by removing cost and resource barriers. In 2017, the company launched Amazon SageMaker, which helps to open the door to AI and ML tools by providing a fully managed service that empowers everyday developers and scientists to use ML – without any previous experience. AWS also delivers custom-built chips and processors to give customers better price-to-performance for their applications.

“Since day one,” says Steve, “AWS has been focused on making ML accessible to everyone. Our approach to generative AI is to invest and innovate, to take this technology out of the realm of research, and make it available to customers of any size and developers of all skill levels.”

Read more about Amazon Bedrock, or discover 7 new generative AI innovations powered by AWS.